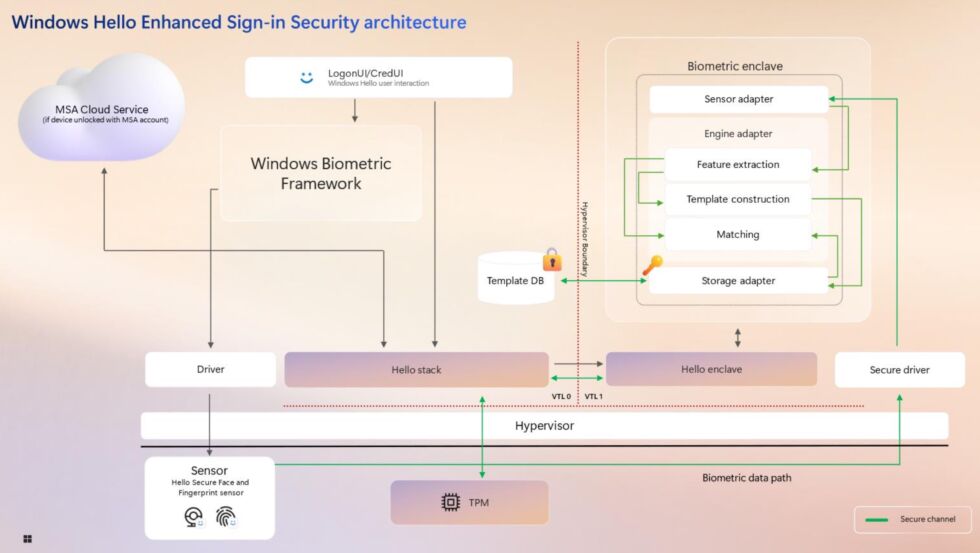

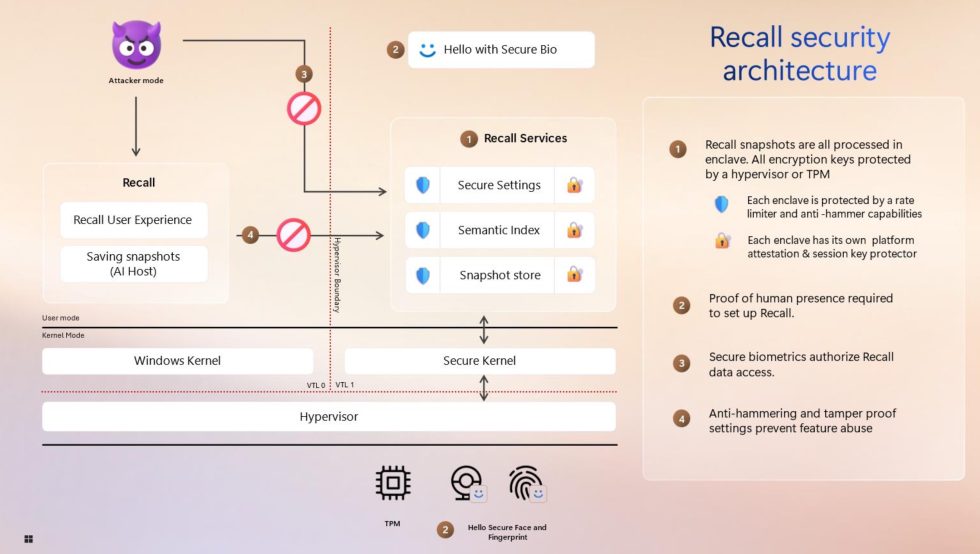

Every time a user pulls up Recall to look through their snapshots, they'll need to use Windows Hello to re-authenticate. When they first set Recall up, they will need to use biometric authentication like a face-scanning camera or fingerprint reader. Unlocking Recall with a Windows Hello PIN can only be configured after Recall has already been turned on, and it's intended as "a fallback method" meant to "avoid data loss if a secure sensor is damaged."

Windows Hello only "briefly" decrypts Recall information when users are actually accessing it, and users will need to re-authorize periodically after a timeout period or in between Recall sessions. The encryption keys used to decrypt Recall data "are cryptographically bound to the identity of the end user, sealed by a key derived from the TPM of the hardware platform," which should close the original Recall's most gaping hole: the ability of another user on a PC to easily navigate to a folder in Windows Explorer and see everything stored by Recall.

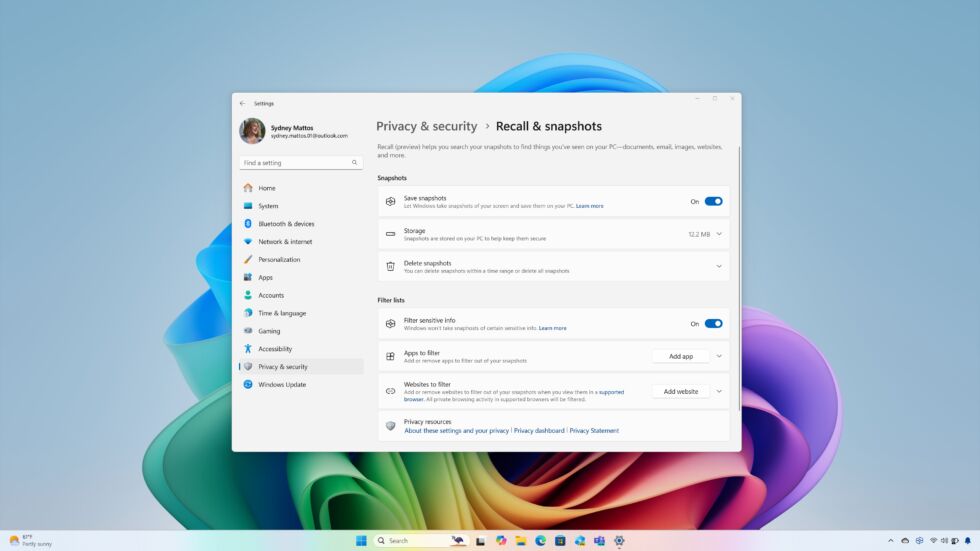

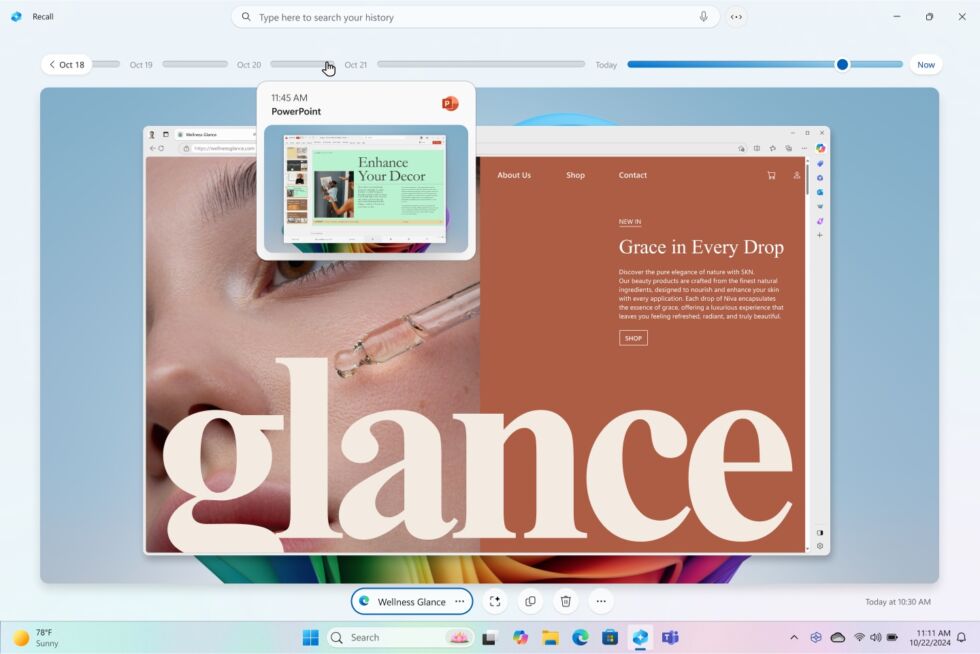

Weston also pointed out a few user settings that can be used to limit what Recall collects. Some of these already existed: controls for how much disk space to use and how long to keep Recall snapshots, the ability to exclude specific apps and websites, the option to delete items from the Recall database, a system tray icon that tells you when Recall is running, and the fact that most browsers won't be captured when running in private browsing mode.

One, a "sensitive content filtering" feature that attempts to "reduce passwords, national ID numbers, and credit card numbers from being stored in Recall," is new; it's based on Microsoft Purview Information Protection, a product the company offers to its enterprise users.

And while we'll still need to see how the new Recall preview stands up to public scrutiny, Microsoft claims it has had the feature audited more thoroughly this time around: Microsoft's internal Offensive Research and Security Engineering Team "has conducted months of design reviews and penetration testing on Recall," and an unnamed third-party security vendor has also "perform[ed] an independent security design review and penetration test."

What Microsoft's post doesn't address is why the Recall feature nearly launched in its original, unsecured form, why it didn't go through the normal Windows Insider testing channels, and what (if any) internal changes are being made to keep this kind of thing from happening again. We asked Microsoft these questions directly but haven't received a response yet.

Around the same time that the initial Recall feature was imploding, Microsoft CEO Satya Nadella had just announced that employees were being told to "do security" when given the choice between launching something quickly and launching something that was secure. Whether this mandate can or will stand up against the company's drive to get as many AI capabilities into all of its products as quickly as possible remains to be seen, but the Recall correction is a step in that direction.

Recall is still just for new PCs

Recall won't be available on the vast majority of Windows PCs—only those that meet the system requirements for the Copilot+ program will be eligible. Those requirements include 16GB of RAM, 256GB of storage, and a neural processing unit (NPU) capable of at least 40 trillion operations per second (TOPS).

For now, that's only Arm Windows PCs with a Snapdragon X Plus or X Elite chip in them, or x86 PCs with Intel's Core Ultra 200V-series chips or AMD's Ryzen AI 300-series chips. These are all chips made for laptops; no company has released a desktop processor that meets the requirements.

Microsoft didn't give a specific timeline for when it would begin rolling Recall out again, but the company had previously announced that it would begin rolling out to Windows Insiders in October.

Listing image by Jason Redmond/AFP via Getty Images
